Career

Discover how professionals are growing with Lablup

Apr 29, 2026

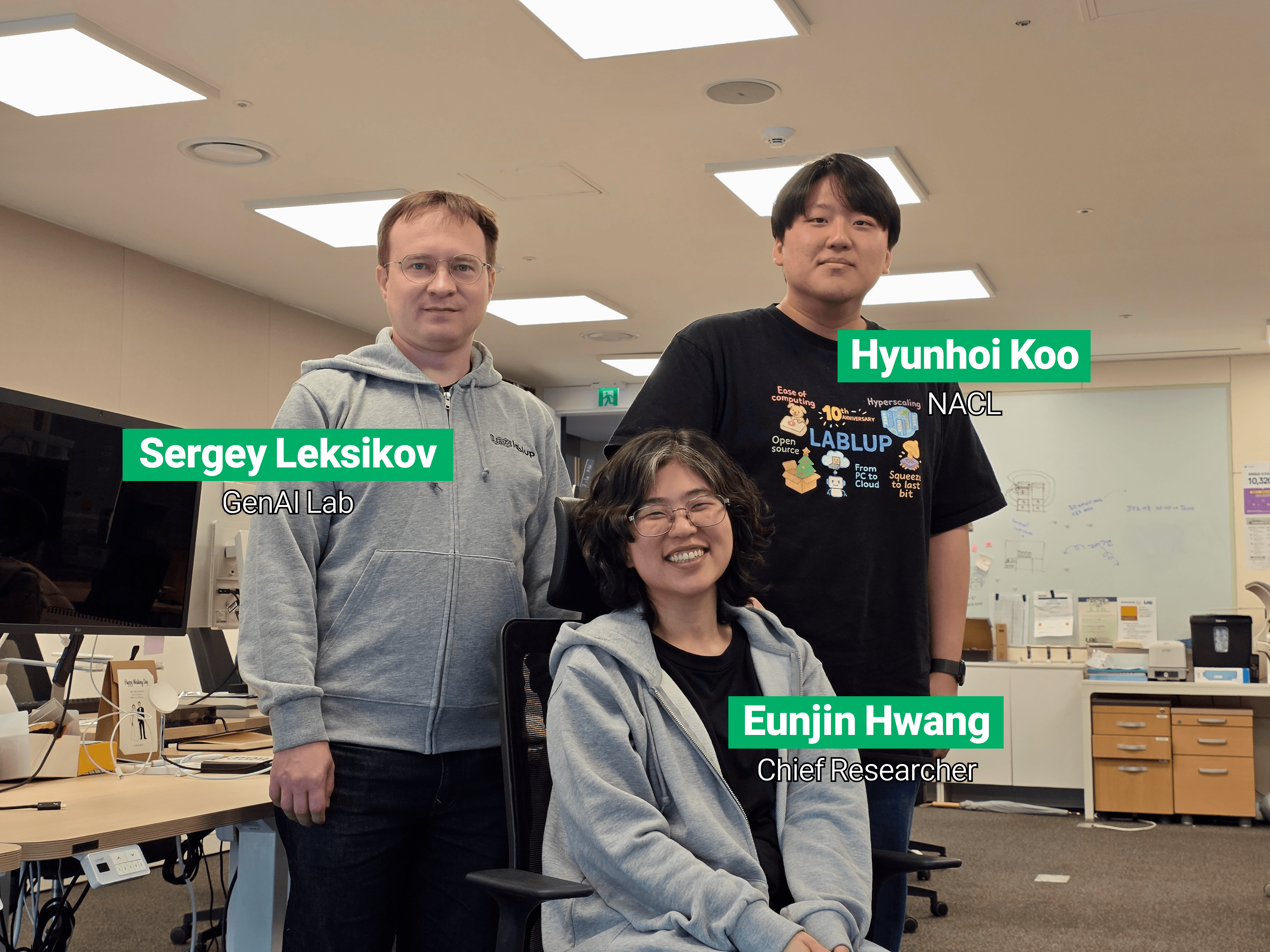

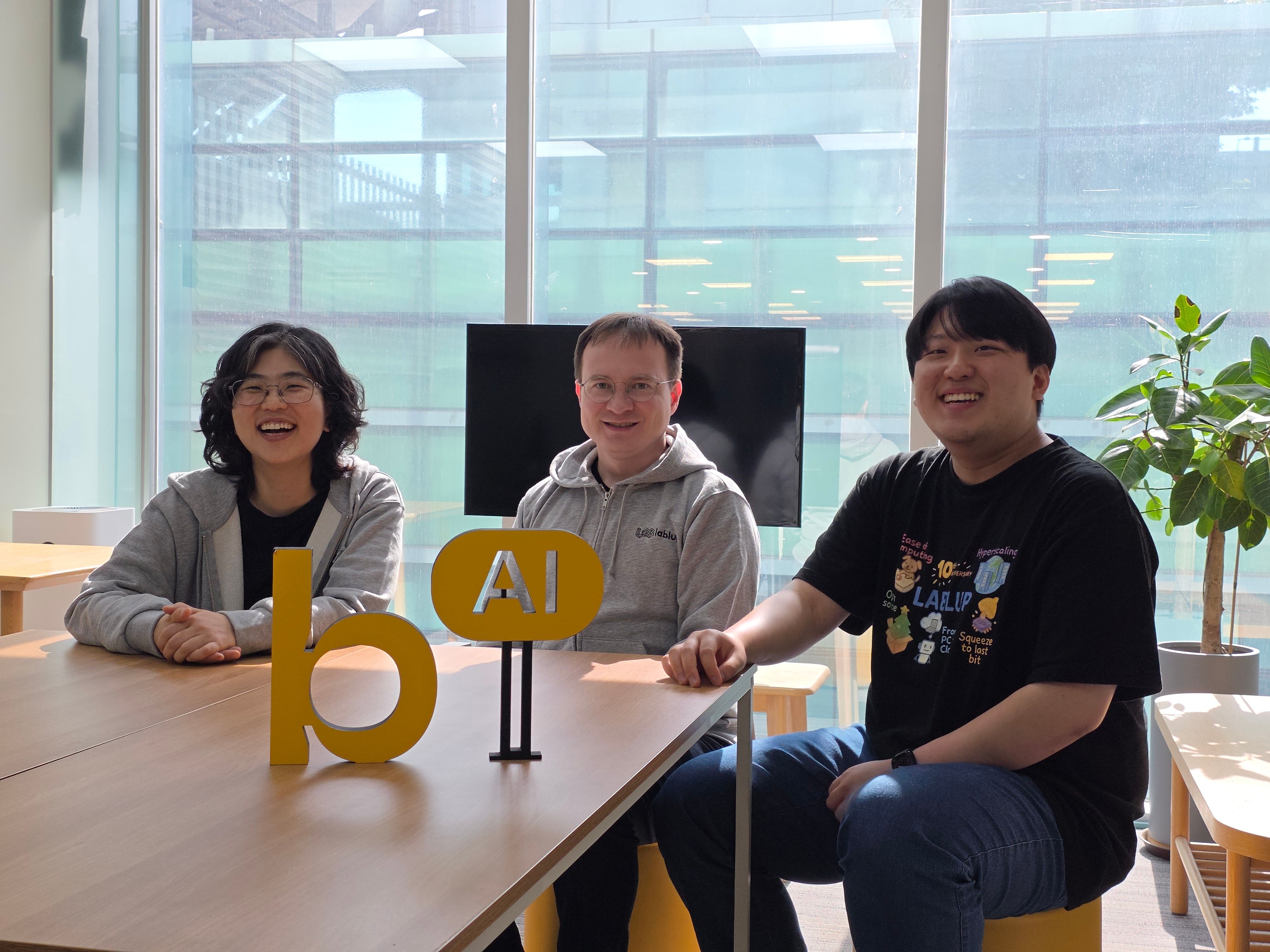

Charting what's next, today | Eunjin Hwang, Hyunhoi Koo, Sergey Leksikov

Soyeong Lim, Technical Content Marketer

Jinho Heo, Technical Writer

At Lablup, there is a team whose work does not land in the current product but shapes what comes next. The Research team looks six months, or even one or two years, ahead to the tools and capabilities Backend.AI may need. Across three labs (GenAI Lab, Model & Data Lab, and NACL), each researcher takes on a different topic, and together they bring those topics into a shared direction. We sat down with three of them to talk about what they are building and how the team comes together: a Principal Researcher with a PhD in physics, a junior researcher who returned to Korea after working at a trading firm in the Netherlands, and a researcher from Russia who finished his master's at KAIST before joining Lablup.

Q. Could you introduce yourselves?

Eunjin | Hello, I am Eunjin Hwang, and I lead the Research team at Lablup as a Principal Researcher. Lablup is my first company. I spent most of my early career in academia, finished my PhD, and then did a postdoctoral fellowship at KIST before moving to Lablup. My PhD is in physics, specifically in a branch of statistical physics called complex systems, which studies how many different components interact to create complex phenomena. Typical examples of complex systems include economic systems, social phenomena, and brain and cognitive functions. During my PhD, I worked on classifying and predicting brain states from EEG (electroencephalogram) signals. Spending so much time on natural intelligence and cognition made me curious about artificial intelligence as well, and that is how I ended up joining the Research team at Lablup.

Hyunhoi | Hello, I am Hyunhoi Koo, and I work in NACL (Next Generation Accelerated Computing Lab) on the Research team. Before joining Lablup, I did an integrated bachelor's and master's program at Imperial College London. During that time, I interned at Google for three months and at Citadel Securities for six months. After graduating, I moved to the Netherlands and worked at IMC Trading, a proprietary trading firm, for about two years. I recently came back to Korea and joined Lablup through the Technical Research Personnel program. AI infrastructure is new territory for me, but I am really enjoying the work.

Sergey | I am Sergey Leksikov. Before joining Lablup in 2020, I did my master's in Knowledge Service Engineering at KAIST, and after graduating I spent about three years as a data scientist at a fintech startup working with ESG (Environmental, Social, and Governance) data. Right now, I am in the GenAI Lab on the Research team, building applications on top of generative AI.

Q. What made each of you decide to join Lablup?

Eunjin | It came together without a formal application. What Lablup was looking for and where my career stood after my postdoc happened to line up. Lablup was planning to set up its corporate research institute and needed PhD-level researchers, and I had been collaborating with Lablup on a project during my postdoc at KIST. When the KIST contract ended, the project still needed to continue. Moving over to Lablup while contributing to the new research institute was a natural fit. That was March 2017, so I was essentially the first hire outside the founders.

Hyunhoi | I was working in high-frequency trading at IMC Trading in the Netherlands. It is a field where trade speed determines everything, so the technical bar on cutting latency is extremely high. I became curious about the AI industry, and when I looked into it, I realized that for AI training and inference, the key metric is not latency but throughput, the number of operations per unit of time. Both fields deal with performance, but the specific thing I was measuring is completely different, and the contrast was actually interesting to me. Around the time I was moving back to Korea, an opportunity to join Lablup through the Technical Research Personnel program came up, so I took it.

Sergey | In 2020, I looked through the Lablup and Backend.AI websites, and I also checked the LinkedIn profiles of people working there, including Joongi and Jeongkyu. I could see they were genuine experts in their fields, with experience that ran from teaching as professors to hands-on work across hardware and software. The senior members of the team were engineers and technologists rather than business or management people, which I really liked. The code repository I checked on GitHub looked well organized, and I wanted to work with experts in this area. The company was already using English as its working language and had plans to expand globally. Those are the reasons I really wanted to work here.

Q. How is the Research team organized, and what is each lab working on?

Eunjin | The Research team is made up of three labs. The GenAI Lab builds different applications on top of generative AI. The Model & Data Lab develops our own language models, including Korean language models. And NACL, the Next Generation Accelerated Computing Lab, looks ahead at new features that could go into Backend.AI and carries them through to implementation. We do not think the whole team needs to move in one direction, so the three labs take on very different topics. We encourage people on our team to bring ideas from the bottom up. There is top-down work too, some of it driven by customer requests, but the way each lab runs is closer to setting up guardrails, so each person can carry their own topic.

Eunjin | This structure comes from my own experience. My postdoc advisor was great at this. His lab worked on computational neuroscience, but the researchers were on completely different topics: sleep, epilepsy, and dementia. He was the one who wove them into a single story for the outside world. When I thought about what the Research team at Lablup should look like, the image came back to me. Honestly, I am not in a position to tell this team what to do. (laughs) The people on this team have far more expertise in their own areas than I do, and they could lead anywhere. My job is to set the stage so they can bring their ideas to life and to connect them with the resources they need.

Q. What project is each of you most focused on right now?

Sergey | Right now, I am focused on a RAG (Retrieval-Augmented Generation) agent. Our APC (AI Platform and Consulting) team and GTM (Go-To-Market) team meet a lot of clients, and they needed a better way to handle customer support efficiently. The Research team decided to take on that internal problem. We have a knowledge base built from past customer questions and answers, and each product's documentation is on GitHub. If the AI agent can read through all of that and give clients a trustworthy answer grounded in our own materials, that helps each team directly. Once we started building it, we saw that the data is large and varied, so I read the latest papers and check recent use cases on X (formerly Twitter) regularly, and I try approaches that might improve efficiency and accuracy in our own setting.
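
For readers new to the term, the retrieval half of RAG can be sketched in a few lines. This is only an illustration under stated assumptions, not the agent Sergey describes: the knowledge-base entries, the `retrieve` and `build_prompt` helpers, and the bag-of-words cosine scoring are all hypothetical stand-ins (production systems typically use embedding models and a vector index), and the step that sends the prompt to a language model is left out.

```python
import math
import re
from collections import Counter

# Hypothetical placeholder entries. The real Q&A knowledge base and product
# documentation mentioned in the interview are not shown here.
KNOWLEDGE_BASE = [
    {"source": "qa/0001", "text": "Example answer: restart a session from the session list."},
    {"source": "docs/storage", "text": "Example doc: storage folders are mounted when a session starts."},
    {"source": "docs/install", "text": "Example doc: the installer checks the container runtime."},
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, k=2):
    """Return up to k entries that share words with the question, best match first."""
    query = Counter(tokenize(question))
    scored = [(cosine(query, Counter(tokenize(e["text"]))), e) for e in KNOWLEDGE_BASE]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [entry for score, entry in scored[:k] if score > 0]


def build_prompt(question, passages):
    """Assemble a prompt that asks the model to answer only from the retrieved passages."""
    context = "\n".join(f"[{p['source']}] {p['text']}" for p in passages)
    return (
        "Answer using only the passages below and cite their sources.\n"
        f"{context}\n"
        f"Question: {question}"
    )


question = "How do I restart a session?"
passages = retrieve(question)
prompt = build_prompt(question, passages)
print(prompt)
```

Keeping the source label next to each passage is what lets an answer be traced back to the team's own materials, which is the "grounded" part of the idea.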

Hyunhoi | My main project right now is Lagrange, an early-stage research project in NACL that integrates Kubernetes with Backend.AI. The name comes from orbital mechanics. Backend.AI is one large celestial body, Kubernetes is another, and Lagrange is the module that sits between them like a space station. A Lagrange point is where the gravitational forces of two bodies balance, and we borrowed the name from there. Kubernetes was originally closer to an orchestrator for microservices and other web workloads. As AI workloads grew, with long-running training and inference jobs that needed a stable place to live, Kubernetes took on a wider role. Today it feels less like a pure orchestrator and more like an infrastructure OS. From our side, Kubernetes is no longer a competitor. It is a platform we can work alongside. Lagrange's approach is to sync the state of a Backend.AI cluster into Kubernetes and show a mirrored version on the Kubernetes side.
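
The idea of one-way state mirroring can be pictured with a small sketch. It is a simplified illustration, assuming both sides can be summarized as name-to-state dictionaries. The `plan_sync` and `apply_actions` functions and the sample state values are hypothetical, no Backend.AI or Kubernetes API is called, and this is not how Lagrange itself is implemented.

```python
def plan_sync(source, mirror):
    """Compute the actions that make `mirror` match `source` (one-way sync)."""
    actions = []
    for name, state in source.items():
        if name not in mirror:
            actions.append(("create", name, state))
        elif mirror[name] != state:
            actions.append(("update", name, state))
    for name in mirror:
        if name not in source:
            # The object no longer exists on the source side, so drop the copy.
            actions.append(("delete", name, None))
    return actions


def apply_actions(mirror, actions):
    """Return a new mirror with the planned actions applied."""
    result = dict(mirror)
    for op, name, state in actions:
        if op == "delete":
            result.pop(name, None)
        else:
            result[name] = state
    return result


# Hypothetical snapshots: the source cluster's view and the current mirrored copy.
source_state = {"session-a": "RUNNING", "session-b": "PENDING"}
mirror_state = {"session-a": "PENDING", "session-c": "RUNNING"}

actions = plan_sync(source_state, mirror_state)
mirror_state = apply_actions(mirror_state, actions)
print(actions)
print(mirror_state)
```

Running the planner a second time on the updated mirror yields no actions, which is the property a mirroring loop relies on: once the two sides agree, there is nothing left to do.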

Q. How does a project run day to day? How does collaboration inside the team, and communication with other teams, flow?

Hyunhoi | Daemyung Kang and I work closely on the Lagrange project. I am about three years into my career, while Daemyung has more than twenty years of experience as a developer, so he is both a teammate and someone close to a mentor. Inside the team, we hold a short daily stand-up of about fifteen minutes at a set time. Beyond that, we rely heavily on asynchronous communication. In async communication, there is a separation, a decoupling, between when a message is sent and when it is received, which means we have to write carefully and precisely. We use that kind of written context to keep our shared understanding sharp.

Eunjin | Inside the Research team, we hold a weekly sync-up meeting to share progress. This month, for the first time, we held what we are calling a Research Team Seminar Day, where the research team presented our work to the wider company. We plan to make it a regular format, both to share our research and to collect feedback from other teams. Beyond that, communication with other teams happens on Teams channels as needed. Since the Research team does not sit at the customer interface, we stay in touch with the Sales and GTM teams regularly to check whether their read of what customers want matches the direction our research is heading.

Hyunhoi | For NACL specifically, we meet with the CoreDev team every two weeks to line up the direction for whatever comes next after Lagrange. We talk through what we are considering, where it matches customer requests, where it does not, and where we should adjust. It has become a regular exchange.

Q. Was there a recent moment where you felt you grew the most?

Sergey | The HS code classification project is what comes to mind. That kind of work usually gets split across a data engineer, an ML engineer, and a data analyst, but I ended up leading the whole thing myself, with some help from interns. Designing and building the entire pipeline from end to end was a clear step for me.

Eunjin | The first time I led a government research project, back in 2021. It was called AI Convergence for Emerging Infectious Disease Response, and within it I was responsible for a subproject analyzing parameters of disease transmission. At the proposal stage, I was the only person available to take on the project lead role. Fortunately, Sergey did excellent modeling work, and the other institutions in our consortium stepped in when we needed them, so we closed out the three-year project cleanly. That experience taught me that what feels impossible starts to work once you actually start, and that a role can shape the person filling it. I grew a lot from it.

Eunjin | The Dokpamo (Independent AI Foundation Model) project was another big growth moment for me. Watching the CoreDev team handle large-scale cluster operations from the side, the overall structure of our own product started to click. Before that, I only used Backend.AI as a user, so if the Jupyter Notebook launched, I did not have to worry about how the pieces fit together behind it. In the past, if something went wrong, I would say something like, "the session menu in our R&D cloud is not loading." Now I can say something more specific: "looks like the NFS mount (the network storage that holds models, data, and code) has disconnected, could someone take a look?" It sounds like a small thing, but it was a meaningful change for me.

Q. Has there been a project that went in an unexpected direction, or that you would describe as a failure?

Hyunhoi | Lagrange itself is a good example. Our starting assumption was that customers would strongly want to see Backend.AI's detailed workload and node state inside Kubernetes, one-to-one. That is why we designed it to mirror the state of a Backend.AI cluster into Kubernetes. Recently, when we synced with other teams, we learned that this specific demand from customers is smaller than we had expected. If that is the case, workload-level one-to-one mirroring might not be the best path. Lately, we are also looking at a different direction where Backend.AI runs on top of Kubernetes as a platform but keeps its orchestration role independent. I am continuing to work on Lagrange, and Daemyung is exploring the feasibility of the new direction.

Eunjin | When an unexpected shift happens, our approach is to talk to the other teams who know the area well and search for the best path together. Even if it means rewriting the original direction, if that turns out to be the better choice, we prefer to adjust quickly.

Q. What do you hope the Research team will look like one or two years from now?

Eunjin | Personally, I would love the Research team to become a team that can take on areas we have not explored yet, such as physical AI or agents, domains that Backend.AI has not yet been shaped around. Improving what we already do well is something the CoreDev team handles excellently. What I would rather do on our side is look a step further out. If we can surface use cases that Backend.AI was not originally designed for, that would be exciting.

Hyunhoi | These days, it is hard to predict the future with any confidence. The industry is moving at an almost absurd pace, and what we think will matter in six months might be completely different from what matters when that time comes. Therefore, I think what the Research team can do right now, in the present, is just as important as preparing for what is coming.

Q. What kind of person do you hope to join the Research team?

Eunjin | Not necessarily a specialist in a specific domain. It is more important to be someone who can surface their own ideas from the bottom up and carry them forward themselves. This is genuinely how the Research team runs. Someone who can think freely and flexibly, bring a lot of ideas to the table, and carry their own work through would be ideal.

Sergey | I hope for someone with real curiosity. Someone who enjoys learning new things, takes interest across many fields, and keeps adding to what they know. That quality matters a lot to me in a future teammate.

Hyunhoi | Two things come to mind. First, you need to be proactive. On the Research team, the ideas have to come from you: "this should happen," or "this might work." We are a bottom-up, open team, so there is almost no micromanagement. Second, you should really enjoy what you do. If you find new things exciting, you will fit well here. Even as a junior, I have been given real responsibility and room to run with projects, and the work is genuinely fun for me.

This interview was conducted on April 16, 2026.

Interviewees: Eunjin Hwang, Hyunhoi Koo, Sergey Leksikov

Interviewer and Photographer: Jinho Heo

Editor: Soyeong Lim